Humane HITL

Humans intervene on purpose

“Just put a human in the loop.”

When you're building an app or enterprise system that centers on an LLM, this phrase is usually thrown out as a mic drop. Leadership breathes a sigh of relief when it's suggested because it means “a real human with an analog brain is going to make the important decisions.” It usually shows up at the end of a risk conversation, right when things start getting uncomfortable. Liability? Human in the loop. Safety? Human in the loop. Hallucinations?

While human-in-the-loop (HITL) designs are necessary, they're increasingly being used as an easy escape hatch. But when that escape hatch is opened, a digital system's failures are dumped onto a human. Instead of building responsible controls and policy on the digital side, we insert an analog human late in the workflow and treat that as governance.

You may have found yourself in a similar situation, or maybe even found yourself at the wrong end of a bad decision because of HITL. You might feel like Jim Carrey's character, Dick, from Fun With Dick and Jane.

In the movie, Dick is suddenly promoted into an executive leadership role. He's ecstatic, telling his partner they can go on that expensive vacation they've always wanted to take. He even mentions they can be a one-income family now with his big, fancy promotion. What he couldn't know is that the CEO dumped his stock over the past year, and he is now (and was always going to be) the fall guy for the company. Dick walks onstage as the face of decisions he never made, defending a system he never designed, absorbing consequences he never agreed to own.

Dick suffered from “Responsibility Without Authority.” Dick was left holding the bag after a long line of irresponsible decisions. These decisions were made by the people above him who actually had authority and agency when they architected the company. "Responsibility Without Authority" is also a HITL failure mode. Of the many ways HITL can fail, here are three of the most common:

- Responsibility Without Authority

When humans lack the authority, knowledge, and training to confidently make the decisions the system is asking them to make - Alarm Fatigue

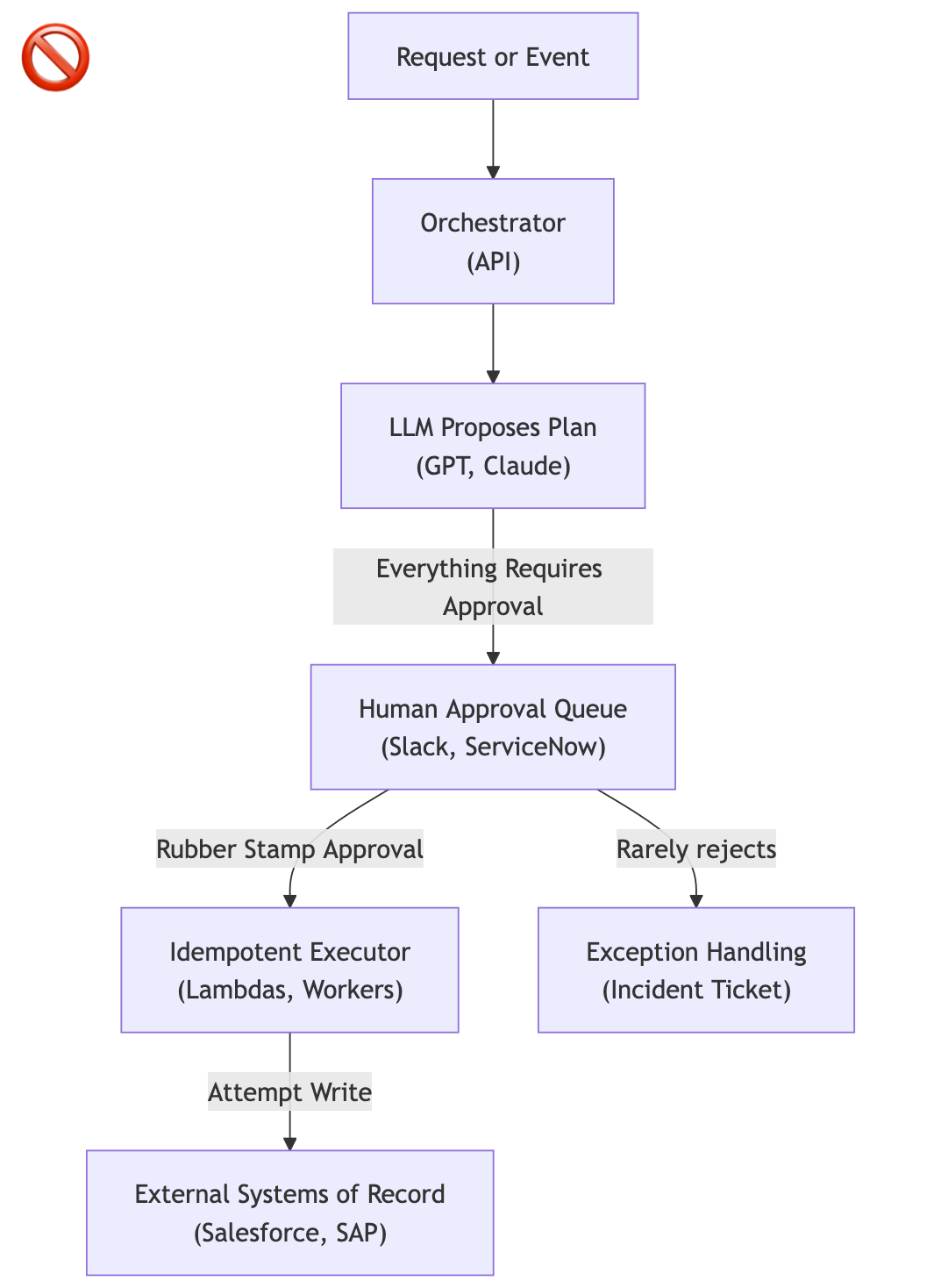

When humans are flustered by rapid frequency decisions that overwhelm their ability to disposition - Rubber-Stamp Automation

When humans are just a rubber stamp in turbo mode

As AI systems are more widely adopted and operate at higher volume, misusing HITL degrades outcomes and unfairly transfers systemic risks to people. Let's explore these failure modes a little deeper:

Responsibility Without Authority

In this failure mode, humans are put in the rough position of approving suggestions made upstream by systems they did not design or control. Furthermore, the human is not properly trained to understand the decisions that were made to produce the suggestion they're approving. For example, the human has likely never read the system prompt; they just have some vague idea of what the system is proposing and why.

In this failure mode, accountability lands on the human not because they had any real control, but simply because they were designed into the architecture as the ultimate bottleneck in a long chain of esoteric logic.

Alarm Fatigue

I first learned about alarm fatigue when I was working in the oil fields ~12 years ago. I was not shocked that alarm fatigue existed; the concept makes sense if you spend any amount of time in a chaotic control room. I was shocked by how quickly it set in. The company men in the room had a hard time distinguishing the alarms that mattered (H2S) from the alarms that didn't (relatively speaking, literally anything else). They just wanted to "shut that thing up," so many times alarms were silenced without a second thought. The symptoms were addressed instead of the disease.

Similarly, in HITL situations, asking a human to do high-frequency decision making overwhelms their attention. High volume, partial context, and time pressure create a system where oversight becomes muscle memory. When the LLM makes a request that is actually erroneous, strange, or downright harmful, it's treated like all of the other requests, without a second thought. Alarm fatigue erodes the exact safeguard HITL is supposed to deliver.

Rubber-Stamp Automation

This failure mode usually takes root long before AI is introduced to the system, because the process was already ceremonial. In this scenario, the system around HITL just makes the human a faster rubber stamp. Good metric go up, boss happy. But what have you accomplished, really?

Now that we've explored the failure modes, when does HITL make sense?

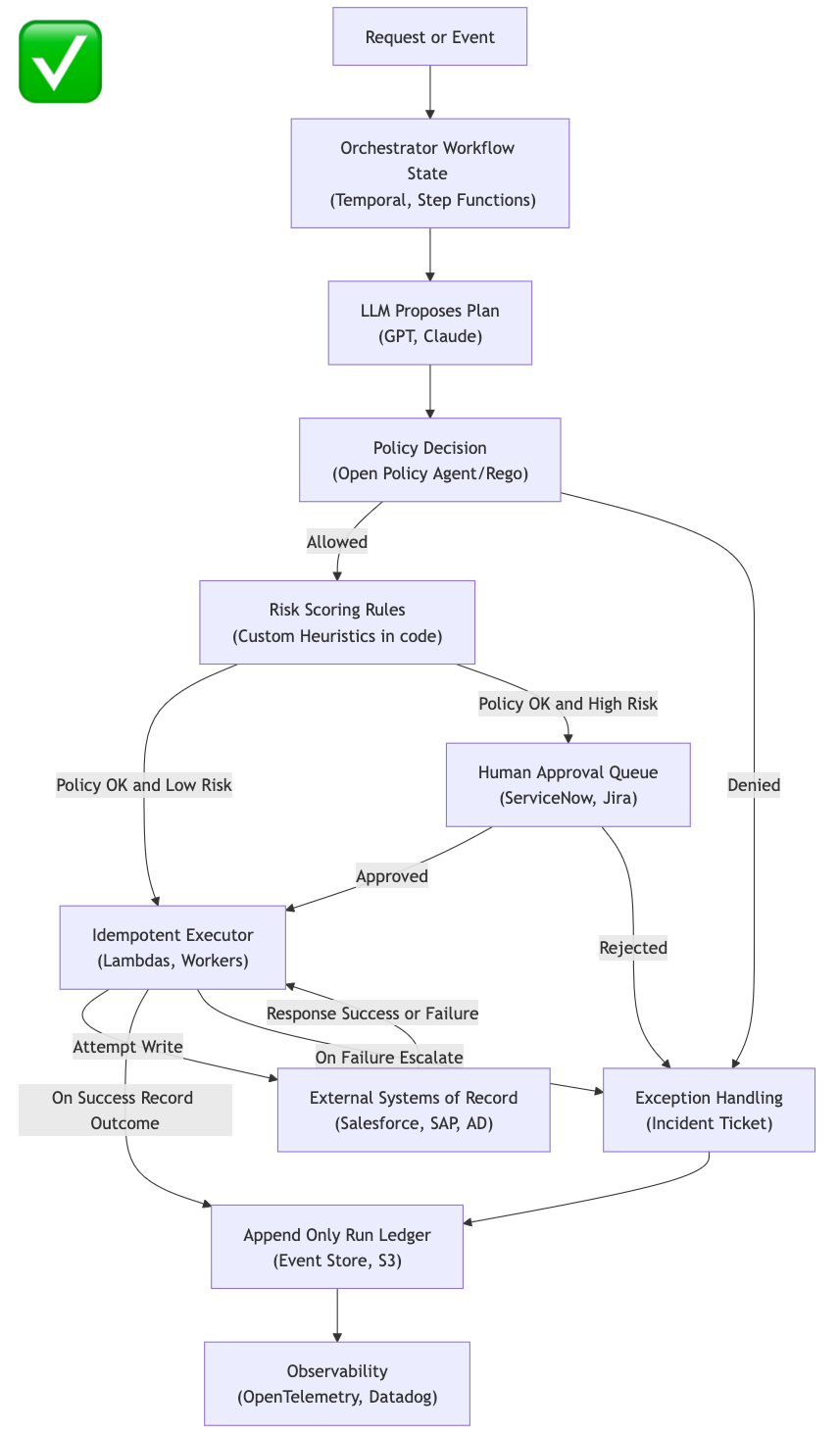

HITL is powerful when deliberately designed into the system. Here are some success modes where humans belong in the loop:

- High-impact, low-frequency decisions

  These are rare events with real consequences. For example, account terminations/lockouts, irreversible financial transactions, fraud detection, etc. This is the inverse of alarm fatigue.

- Policy exceptions and ambiguity

  Escalation to a human is appropriate when a suggested action falls outside the bounds. “Falling outside the bounds” is caught by proper policy definition and risk scoring in your application, not by the human. This puts the human in the exception path, not in the default logic.

- Situational Override

  Human users of the system know when the system's technically correct and fully compliant behavior must be stopped, given what is happening outside of the system. The system cannot know when the context has shifted, because that context lives in unfolding events or in the minds of the people running the system, and it has not made it into the model as captured data. Think active incident responses, audits/legal holds, a high-visibility launch, etc.
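To make the three success modes concrete, here is a minimal Python sketch of a router that keeps the human in the exception path. Everything in it is an assumption for illustration: the `Proposal` fields, the `Route` names, the 0-to-1 risk scale, and the `RISK_THRESHOLD` value are hypothetical and would need to come from your own policy definitions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_EXECUTE = "auto_execute"
    ESCALATE_TO_HUMAN = "escalate_to_human"
    HOLD = "hold"


@dataclass(frozen=True)
class Proposal:
    action: str
    risk_score: float    # hypothetical scale: 0.0 (harmless) to 1.0 (severe)
    irreversible: bool   # e.g. account termination, irreversible transfer
    within_policy: bool  # computed by a policy check in code, not by the reviewer


# Hypothetical threshold; a real system would tune and version this value.
RISK_THRESHOLD = 0.7


def route(proposal: Proposal, override_active: bool = False) -> Route:
    """Decide where a model's proposal goes next."""
    # Situational override: a human has deliberately paused automation
    # (active incident, legal hold, high-visibility launch).
    if override_active:
        return Route.HOLD
    # Policy exception: the application detects the out-of-bounds case.
    if not proposal.within_policy:
        return Route.ESCALATE_TO_HUMAN
    # High-impact decisions are rare, so a human can afford to look closely.
    if proposal.irreversible or proposal.risk_score >= RISK_THRESHOLD:
        return Route.ESCALATE_TO_HUMAN
    # Default logic: routine, low-risk, in-policy work never reaches a human.
    return Route.AUTO_EXECUTE
```

With this shape, a routine proposal such as `Proposal("send_reminder", 0.1, False, True)` routes to `Route.AUTO_EXECUTE`, while anything irreversible or out of policy is escalated. The reviewer only sees the cases the system has already flagged as worth their attention.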

Humans are terrible at making decisions without context and at being real-time filters for high-volume systems. However, humans are fantastic at “lgtm”-ing low-impact decisions! But we shouldn't be designing our systems to arbitrarily need a human as a rubber stamp. If your AI-driven app requires constant human interaction to remain safe, the system will never be safe. Human vigilance is bounded. Attention decays, context is lost, and fatigue builds, so the human always ends up failing against the entropy of a digital system.

Don't make your coworkers scapegoats. HITL should be as deliberate as possible. HITL should never be used as a crutch for systems that can otherwise define their own policy and heuristics through the old ways:

- Policy engines

- Orchestration layers

- Versioned state

- Controlled executors
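As a rough illustration of how those four pieces could fit together, here is a small Python sketch. It is not a reference design: the class names, the refund example, and the `max_refund` limit are all hypothetical, and the `handle` function stands in for a much richer orchestration layer.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Proposal:
    action: str
    params: dict


@dataclass
class VersionedState:
    # Append-only decision log; the length of the log is the current version.
    history: List[dict] = field(default_factory=list)

    def record(self, entry: dict) -> int:
        self.history.append(entry)
        return len(self.history)


class PolicyEngine:
    """Hypothetical policy: only refunds are known, and only up to a limit."""

    def __init__(self, max_refund: float):
        self.max_refund = max_refund

    def decide(self, proposal: Proposal) -> str:
        if proposal.action != "refund":
            return "deny"
        amount = proposal.params.get("amount", 0)
        if amount <= 0:
            return "deny"
        if amount <= self.max_refund:
            return "allow"
        return "escalate"  # out of bounds: this is the human's exception path


class ControlledExecutor:
    """Runs only actions that were explicitly registered."""

    def __init__(self, handlers: Dict[str, Callable[[dict], None]]):
        self._handlers = handlers

    def execute(self, proposal: Proposal) -> None:
        handler = self._handlers.get(proposal.action)
        if handler is None:
            raise PermissionError(f"action not allowed: {proposal.action}")
        handler(proposal.params)


def handle(proposal: Proposal, policy: PolicyEngine,
           executor: ControlledExecutor, state: VersionedState) -> str:
    """Orchestration: the model proposes, the policy decides, the log remembers."""
    decision = policy.decide(proposal)
    state.record({"action": proposal.action,
                  "params": proposal.params,
                  "decision": decision})
    if decision == "allow":
        executor.execute(proposal)
    return decision
```

In this sketch the model's output is only ever a `Proposal`. It never touches the executor directly, every decision is logged before anything runs, and a human is pulled in only when `decide` returns `"escalate"`.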

Models propose, systems decide.

Humans intervene on purpose.

If you find yourself also thinking about these problems, let's nerd out. Drop me a line anytime at kyle@directiv.ai.